I used to ignore privacy policies.

Like most people, I scrolled, clicked “Accept,” and moved on. Then one day I decided to actually read one. Thirty minutes later, I realized something uncomfortable — the document didn’t feel written for me. It felt written to protect the company.

That’s when I started looking for patterns.

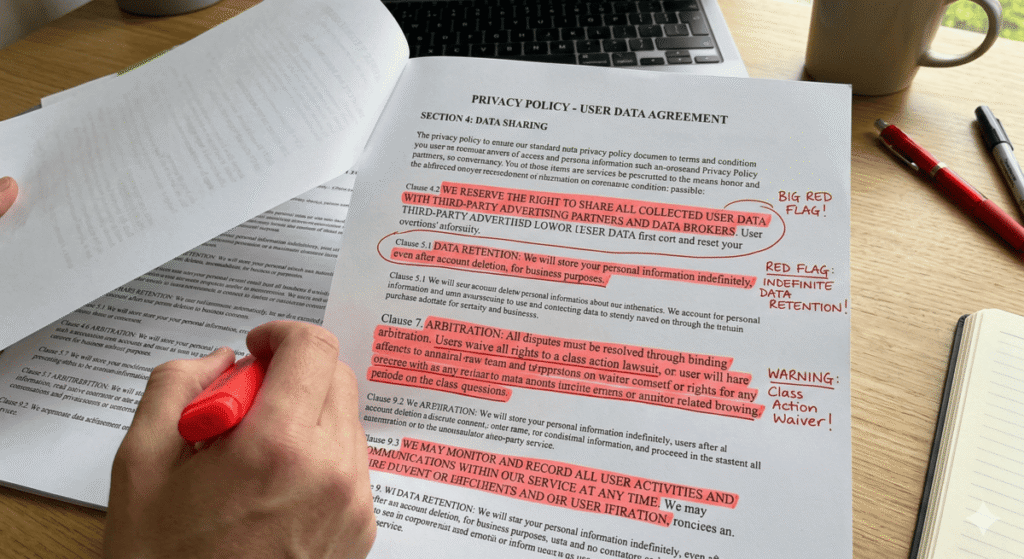

Now, every time I open a privacy policy, I don’t read it like a novel. I scan it like an investigator. I look for specific red flags that reveal how a company really handles data.

Here’s exactly how I do it.

First, I Search for Third-Party Sharing

I immediately press Ctrl+F and type:

- “third”

- “partner”

- “affiliate”

- “service provider”

If I see phrases like “trusted partners” without specific names, I slow down.

When a company refuses to clearly identify who receives user data, I treat that as a transparency issue. Clear policies name categories or companies. Vague ones leave room for expansion.

That flexibility usually benefits the company — not the user.
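
For anyone who would rather script this step than press Ctrl+F, here is a minimal Python sketch of the same search. The search terms come from the list above; the `VAGUE_PHRASES` list, the function names, and the naive sentence splitter are illustrative assumptions, not a standard or a complete detector.

```python
import re

# Search terms taken from the list above.
SHARING_TERMS = ["third", "partner", "affiliate", "service provider"]

# Assumption: a few example phrases that name no specific recipient.
VAGUE_PHRASES = ["trusted partners", "selected partners", "our partners"]


def split_sentences(text: str) -> list[str]:
    """Naive splitter: breaks after '.', '!' or '?' followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def find_sharing_sentences(policy: str) -> list[dict]:
    """Return each sentence that mentions a sharing term, with a vagueness flag."""
    hits = []
    for sentence in split_sentences(policy):
        lowered = sentence.lower()
        matched = [term for term in SHARING_TERMS if term in lowered]
        if matched:
            hits.append({
                "sentence": sentence,
                "terms": matched,
                # True when the sentence uses one of the unnamed-recipient phrases.
                "vague": any(phrase in lowered for phrase in VAGUE_PHRASES),
            })
    return hits
```

A sentence flagged as `vague` still needs a human read. The sketch only narrows down where to look.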

Then I Check Data Retention

Next, I search for “retain” or “retention.”

If I read something like:

“We retain your data as long as necessary.”

I ask: Necessary for what?

If the policy doesn’t define timeframes, I assume the company keeps the data longer than I expect.

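Under frameworks like the General Data Protection Regulation, companies must keep personal data no longer than necessary for the purposes they collected it for, and they should be able to justify their retention periods. When I don’t see clear limits, I see risk.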
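
The retention check can be sketched the same way: pull out every sentence that mentions retention and test whether it states a concrete timeframe. The regular expressions and the function name below are assumptions for illustration. They only recognize numeric periods such as "90 days" and will miss spelled-out ones such as "two years".

```python
import re

# Sentences (naively delimited by ., ! or ?) that mention retain/retention.
RETENTION_SENTENCE = re.compile(
    r"[^.!?\n]*\b(?:retain\w*|retention)\b[^.!?\n]*[.!?]?", re.IGNORECASE
)

# A concrete period such as "30 days" or "2 years" (digits only, by assumption).
TIMEFRAME = re.compile(r"\b\d+\s*(?:day|week|month|year)s?\b", re.IGNORECASE)


def check_retention(policy: str) -> list[tuple[str, bool]]:
    """Return (sentence, has_timeframe) for every retention sentence."""
    results = []
    for match in RETENTION_SENTENCE.finditer(policy):
        sentence = match.group(0).strip()
        results.append((sentence, bool(TIMEFRAME.search(sentence))))
    return results
```

Any sentence that comes back with `False` is a candidate for the "Necessary for what?" question.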

I Look at How They Define “Personal Data”

This part surprises most people.

Some companies define personal data so broadly that it includes:

- Device identifiers

- Behavioral patterns

- Location signals

- Inferred interests

- Cross-device tracking identifiers

When I see an extremely wide definition, I understand something important: they want flexibility.

Broad definitions allow companies to collect more than users assume.

I Scan for Advertising Language

I search for:

- “Advertising”

- “Marketing”

- “Analytics”

- “Business purposes”

If I find references to “personalized advertising” or “marketing partners,” I treat cross-platform tracking as likely.

In California, users may have the right to opt out of the sale or sharing of their personal information under the California Consumer Privacy Act. In my experience, companies tend to spell out those rights clearly only where regulations require them to.

So I read that section carefully.
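
To read the advertising sections in context rather than as isolated hits, a keyword-in-context listing helps. This is a rough sketch using the search terms above; the function name and the 40-character window are arbitrary assumptions.

```python
import re

# Search terms from the list above, lowercased for display.
AD_TERMS = ["advertising", "marketing", "analytics", "business purposes"]


def keyword_in_context(policy: str, terms=AD_TERMS, width: int = 40) -> list[tuple[str, str]]:
    """Return (term, snippet) pairs, each snippet showing `width` characters on both sides."""
    results = []
    for term in terms:
        for match in re.finditer(re.escape(term), policy, flags=re.IGNORECASE):
            start = max(0, match.start() - width)
            end = min(len(policy), match.end() + width)
            # Collapse line breaks so each snippet prints on one line.
            results.append((term, policy[start:end].replace("\n", " ")))
    return results
```

Results are grouped by term in the order of `AD_TERMS`, so all "advertising" snippets appear before any "marketing" ones.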

I Pay Attention to the Word “May”

This word matters more than people think.

When I read:

“We may share your information…”

I recognize flexibility.

“May” gives the company room to expand data use later without rewriting the policy. If I see that word repeatedly without boundaries, I flag it mentally.
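
One way to make that mental flag concrete is to tally what the policy says it "may" do. The sketch below counts the word that follows each "may" and skips an intervening "also". The function name is an assumption, and the pattern will also count the month "May", so treat the numbers as a rough signal.

```python
import re
from collections import Counter

# "may" followed by an optional "also" and then the word we want to tally.
MAY_PATTERN = re.compile(r"\bmay\s+(?:also\s+)?(\w+)", re.IGNORECASE)


def may_verbs(policy: str) -> Counter:
    """Count the words that directly follow 'may' (for example share, collect, use)."""
    return Counter(match.group(1).lower() for match in MAY_PATTERN.finditer(policy))
```

Calling `may_verbs(text).most_common(5)` gives a quick view of which permissions the policy leaves open most often.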

I Check Whether User Rights Feel Practical

A policy can list rights without making them usable.

So I ask:

- Can I easily request deletion?

- Do they give a working email?

- Do they provide a web form?

- Do they specify response timelines?

The Federal Trade Commission emphasizes transparency in consumer disclosures. If I can’t figure out how to act on my rights in two minutes, I question the intent.
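
The four questions above translate into a simple presence check. This sketch only tests whether a policy mentions each item. It cannot confirm that an email address works or that a form exists. The check names and patterns are illustrative assumptions.

```python
import re

# One pattern per question above; all of them are rough heuristics.
RIGHTS_CHECKS = {
    "deletion_mentioned": re.compile(r"\b(?:delet\w+|eras\w+)\b", re.IGNORECASE),
    "contact_email": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "web_form_or_link": re.compile(r"https?://\S+|web\s?form|request form", re.IGNORECASE),
    "response_timeline": re.compile(
        r"within\s+\d+\s+(?:business\s+)?(?:day|week|month)s?", re.IGNORECASE
    ),
}


def rights_checklist(policy: str) -> dict[str, bool]:
    """Report which practical details the policy text appears to include."""
    return {name: bool(pattern.search(policy)) for name, pattern in RIGHTS_CHECKS.items()}
```

A `False` entry shows where the policy lists a right without explaining how to use it.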

When I Don’t Want to Read 30 Pages

Sometimes I evaluate multiple apps in one day. Reading each privacy policy line by line doesn’t scale.

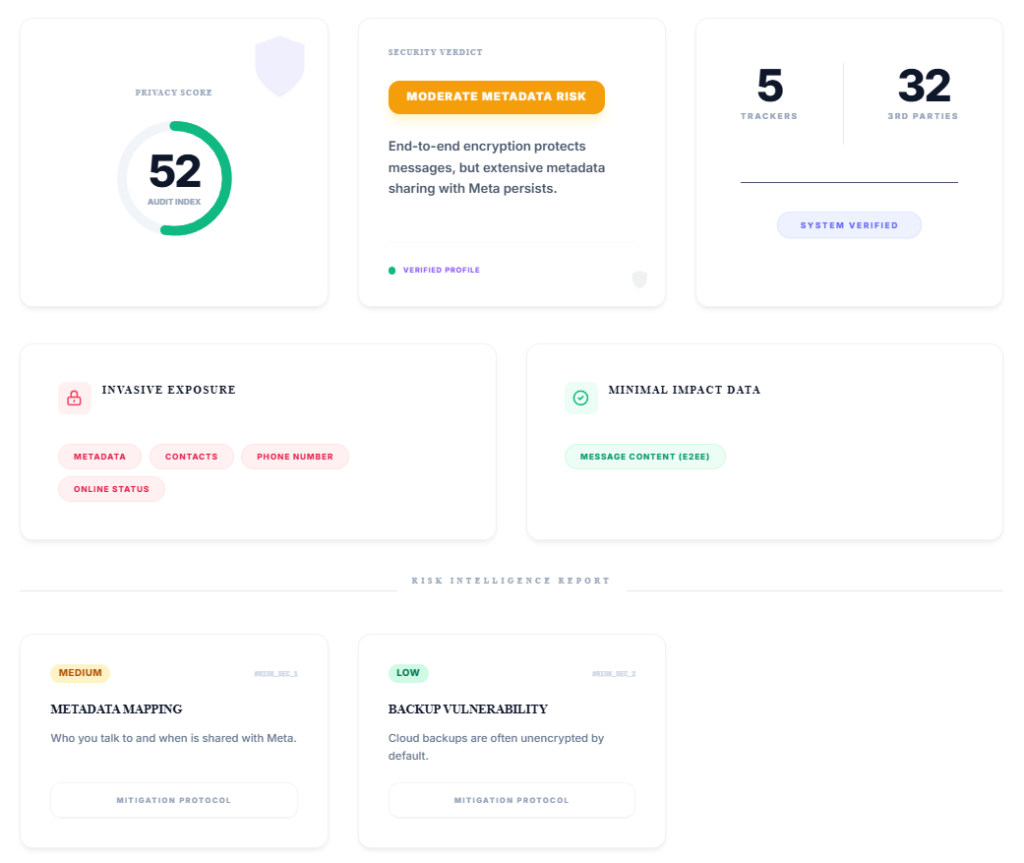

That’s why I built a Privacy Policy Analyzer for Pixel Defence.

Instead of summarizing blindly, it highlights:

- Vague third-party language

- Indefinite retention clauses

- Advertising disclosures

- Broad consent structures

It runs entirely in the browser. It doesn’t store or upload anything. It simply surfaces the exact patterns I look for manually.
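
To illustrate the difference between highlighting and summarizing, here is a small Python sketch that marks matching phrases in place and leaves the rest of the text untouched. It is not the analyzer's actual implementation. The category names, the patterns, and the `[[category: ...]]` marker format are all assumptions chosen for the example.

```python
import re

# Assumed example patterns, one per category listed above.
PATTERNS = {
    "third_party": r"trusted partners|third[- ]part(?:y|ies)",
    "retention": r"as long as necessary",
    "advertising": r"personalized advertising|marketing partners",
    "consent": r"by using (?:our|this) (?:service|site|app)[^.]*?you (?:agree|consent)",
}

# One combined regex with a named group per category, so matches never overlap.
COMBINED = re.compile(
    "|".join(f"(?P<{name}>{pattern})" for name, pattern in PATTERNS.items()),
    re.IGNORECASE,
)


def highlight(policy: str) -> str:
    """Wrap each matched phrase in a [[category: phrase]] marker."""
    return COMBINED.sub(lambda m: f"[[{m.lastgroup}: {m.group(0)}]]", policy)
```

Because the original wording stays in place, the reader can still judge each flagged phrase in its own sentence.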

You can try it here: Privacy Policy Analyzer

The Real Lesson I Learned

Privacy policies don’t usually lie. They strategically frame permissions.

Companies protect themselves with flexible wording. If you read casually, you miss it. If you read intentionally, patterns appear.

Now, before I click “Accept,” I ask:

- Do they clearly explain who receives my data?

- Do they define how long they keep it?

- Do they disclose advertising partnerships?

- Can I realistically opt out?

If the answers feel vague, I slow down.

Because once I grant permission, reversing it becomes harder than most people realize.

And that’s why I read differently now.

Frequently Asked Questions About Finding Red Flags in Privacy Policies

How do I quickly find red flags in a privacy policy?

I don’t read privacy policies from top to bottom. I search for specific trigger words like “third-party,” “retain,” “advertising,” and “partners.” Those sections usually reveal how the company shares and stores data. When I see vague language without clear limits, I treat it as a red flag.

What is the biggest red flag in a privacy policy?

The biggest red flag I see is undefined third-party sharing. If a company says it shares data with “trusted partners” but doesn’t clearly explain who they are or what they do with the data, I slow down immediately. Transparency matters more than promises.

Can a company legally sell my data?

Yes, depending on jurisdiction and disclosure. Many companies disclose data “sharing” for advertising purposes instead of using the word “sell.” Under regulations like the California Consumer Privacy Act, companies must provide opt-out rights in certain cases. But I always check the policy carefully to see how they define selling versus sharing.

How do I know if an app is sharing my data with advertisers?

I search for terms like “personalized advertising,” “marketing partners,” or “analytics providers.” If the policy references cross-device identifiers, tracking technologies, or ad optimization, I assume some level of advertiser data flow exists.

How long should companies keep my personal data?

Responsible policies define clear retention periods. If I see phrases like “we retain data as long as necessary” without timeframes, I consider that a risk signal. Regulations such as the General Data Protection Regulation require companies to justify retention periods, but enforcement varies.

Is there a faster way to analyze a privacy policy?

Yes. Instead of manually scanning dozens of pages, I use structured analysis. Our Privacy Policy Analyzer highlights vague sharing clauses, retention risks, and advertising disclosures automatically. It runs client-side and doesn’t store any data.

Why do privacy policies use vague language?

Companies write policies to maintain flexibility. Words like “may” and “from time to time” allow them to expand data practices without rewriting the document. When I see repeated flexibility without boundaries, I recognize strategic wording.